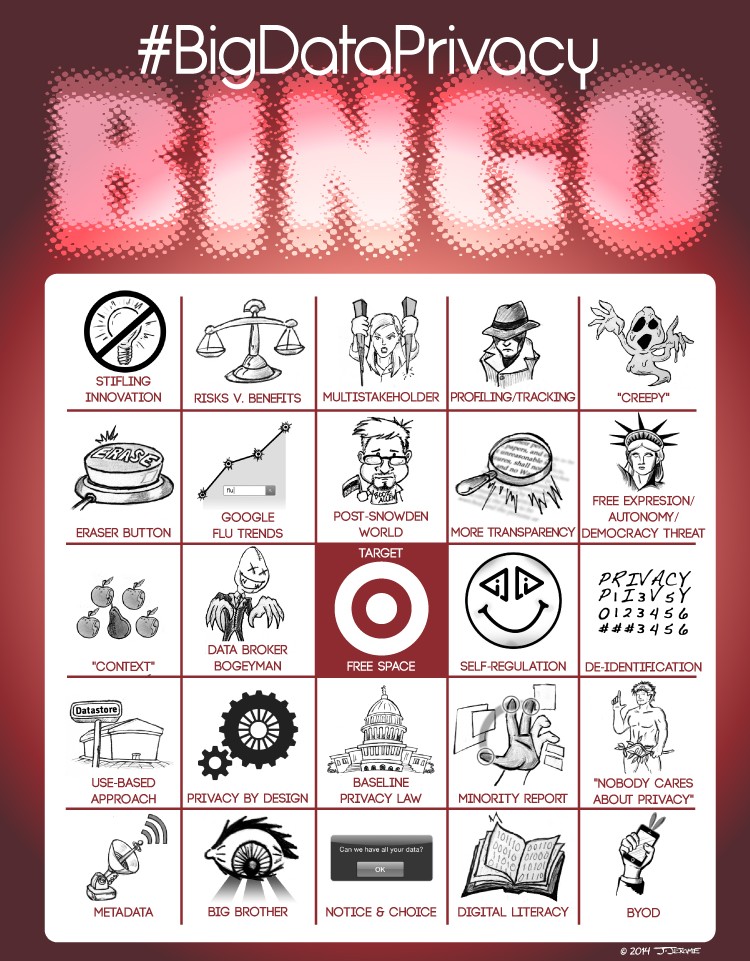

After a three-year dry spell, OkCupid’s fascinating OkTrends blog roared to life on Monday with a post by Christian Rudder, cofounder of the dating site. Rudder boldly declared that his matchmaking website “experiment[s] on human beings.” His comments are likely to reignite the controversy surrounding A/B testing on users in the wake of Facebook’s “emotional manipulation” study. This seems to be Rudder’s intention: he writes that “if you use the Internet, you’re the subject of hundreds of experiments at any given time, on every site. That’s how websites work.”

Rudder’s announcement detailed a number of the fascinating ways that OkCupid “plays” with its users’ information. From removing text and photos from people’s profiles to duping mismatches into thinking they’re excellent matches for one another, OkCupid has tried a lot of different methods to help users find love. Curiously, my gut reaction to this news was that it was much less problematic than the similar sorts of tests being run by Facebook – and basically everyone involved in the Internet ecosystem.

After all, OkCupid is quite literally playing Cupid. Playing God. There’s an expectation that there’s some magic to romance, even if it’s been reduced to numbers. Plus, there’s the hope these experiments are designed to better connect users with eligible dates, while most website experiments aim to improve user engagement with the service itself. Perhaps all is fair in love, even if it requires users to divulge some of the most sensitive personal information imaginable.

Whatever the ultimate value of OkCupid’s, or Facebook’s, or really any organization’s user experiments, critics are quick to suggest these studies reveal how much control users have ceded over their personal information. But I think the real issue is broader than any concern over “individual control.” Instead, these studies raise the question of how much technology – fueled by our own data – can shape and mediate interpersonal interactions.

OkCupid’s news immediately brought to mind a talk by Patrick Tucker just last week at the Center for Democracy & Technology’s first “Always On” forum. Tucker, editor at The Futurist magazine and author of The Naked Future, provided a firestarter talk that detailed some of the potential of big data to reshape how we live and interact with each other. At a similar TEDx talk last year, he posited that all of this technology and all of this data can be used to give individuals an unprecedented amount of power. He began by discussing prevailing concerns about targeted marketing: “We’re all going to be faced with much more aggressive and effective mobile advertising,” he conceded, “. . . but what if you answered a push notification on your phone that you have a 60% probability of regretting a purchase you’re about to make – this is the antidote to advertising!”

But he quickly moved beyond this debate. He proposed a hypothetical where individuals could be notified (by push notification, of course) that they were about to alienate their spouse. Data can be used not just to set up dates, but to manage marriages! Improve friendships! For an introvert such as myself, there’s a lot of appeal to these sorts of applications, but I also wonder when all of this information becomes a crutch. As OkCupid explains, when its service tells people they’re a good match, they act as if they are, “[e]ven when they should be wrong for each other.”

Occasionally our reliance on technology not only crosses some illusory creepy line, but fundamentally changes our behavior. Last year, at IAPP’s Navigate conference, I met Lauren McCarthy, an artist researcher in residence at NYU, who discussed how she used technology to augment her ability to communicate. For example, she demoed a “happy hat” that would monitor the muscles in the wearer’s face and provide a jolt of physical pain if the wearer stopped smiling. She also explained using technology and crowd-sourcing to make her way through dates. She would secretly videotape her interactions with men in order to provide a livestream for viewers to give her real-time feedback on the situation. “He likes you.” “Lean in.” “Act more aloof,” she’d be told. As part of the experiment, she’d follow whatever directions were being beamed to her.

I asked her later whether she’d ever faced the situation of feeling one thing, e.g., actually liking a guy, and being directed to “go home” by her string-pullers, and she conceded she had. “I wanted to stay true to the experiment,” she said. On the surface, that struck me as ridiculous, but as I think on her presentation now, I wonder if she was forecasting our social future.

Echoing OkCupid’s results, McCarthy also discussed a Magic 8 ball device that a dating pair could figuratively shake to direct their conversation. Smile. Compliment. Laugh, etc. According to McCarthy, people had reported that the device had actually “freed” their conversation, and helped liberate them from the pro forma routines of dating.

Obviously, we are free to ignore the advice of Magic 8 balls, just as we can ignore push notifications on our phones. But if those push notifications work? If the algorithmic special sauce works? If data provides “better dates” and less alienated wives, why wouldn’t we use it? Why wouldn’t we harness it all the time? From one perspective, this is the ultimate form of individual control, where our devices can help us to tailor our behavior to better accommodate the rest of the world. Where then does the data end and the humanity begin? Privacy, as a value system, pushes up against this question, not because it’s about user control but because part of the value of privacy is in the right to fail, to be able to make mistakes, and to have secret spaces where push notifications cannot intrude. What does that space look like, however, when OkCupid is pulling our heartstrings?